The love-hate relationship of humanity with technology seems to be universal across ages. We love the benefits that technology brings, but hate it when it threatens our jobs, or forces us to learn new skills faster than our comfortable pace.

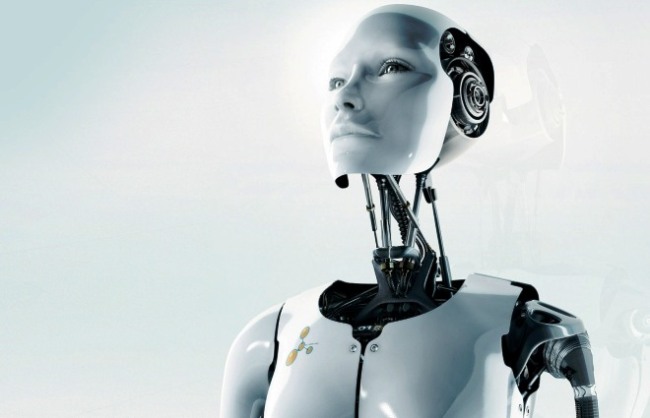

In the words of Clayton Christensen, one could say that technology is slowly disrupting human labor (and I include knowledge work here). The latest scare for humanity is the so-called “technological singularity”, where artificial intelligence learns how to design improved versions of itself and so exponentially surpasses human intelligence, leaving us humans, well, irrelevant. Popular author Ray Kurzweil predicts this will happen around 2045. Less extreme viewpoints still see automation as a major disruptive force to the social order, as even knowledge workers will be out of jobs in the next few decades. And so the fatalists wonder: how do we deal with the social implications of a few billion unemployed? Will anarchy be the norm in 2045?

While the logical thread leading to a fatalist view of the future may seem sound, it is in fact plagued by serious flaws rooted in a misunderstanding of the differences between silicon-based and carbon-based intelligence.

As Dave Snowden, the cognitive complexity maverick, points out, humans are pattern-based intelligences, not information processing units. Said differently, wisdom is in the patterns and not necessarily in knowledge. Much of the so-called knowledge work is in fact information processing, where computers do have a competitive advantage; but since they aren’t purposeful or self-aware, that advantage can hardly be called “unfair”. By contrast, pattern-based cognition is what artists employ to extract relative and subjective, but nonetheless valid and valuable, viewpoints of the world; it is also what thought leaders use to sense future patterns that break with the past. So, as automation might take over information-processing knowledge tasks, humanity has a unique opportunity to liberate itself for truly creative work, which takes not just knowledge but emotions and desires. Humanity’s last stronghold, if you will, is creativity, which cannot be fully separated from intentionality, purpose and self-awareness. It is our ability and desire to create our future that separates us from machines. It is precisely because we did not have automation until the 21st century that humans had to be used for jobs machines should otherwise do, leading to sentiments of alienation, which, according to Buckminster Fuller, can eventually result in severe social unrest and even war. And so I agree that automation is not a threat to human relevance; rather, we need to reassess the definition of human labor.

Staying with Buckminster Fuller, here’s an alternative view to Kurzweil’s world of 2045, formulated decades ago and likely still well ahead of our times:

“we must do away with the absolutely specious notion that everybody has to earn a living. It is a fact today that one in ten thousand of us can make a technological breakthrough capable of supporting all the rest. The youth of today are absolutely right in recognizing this nonsense of earning a living. We keep inventing jobs because of this false idea that everybody has to be employed at some kind of drudgery because, according to Malthusian-Darwinian theory, he must justify his right to exist. So we have inspectors of inspectors and people making instruments for inspectors to inspect inspectors. The true business of people should be to go back to school and think about whatever it was they were thinking about before somebody came along and told them they had to earn a living.”

Fuller acknowledges that “man is going to be displaced altogether as a specialist by the computer.” But, according to Fuller, man is ill suited as a specialist, and specialization is not man’s highest calling: “man himself is being forced to reestablish, employ, and enjoy his innate ‘comprehensivity’”. So Fuller’s vision is that labor specialization is counterproductive to unleashing human potential in the first place. Fuller goes on to point out that “one of humanity’s prime drives is to understand and be understood. All other living creatures are designed for highly specialized tasks. Man seems unique as the comprehensive comprehender and co-ordinator of local universe affairs. As a consequence of the slavish ‘categoryitis’ the scientifically illogical, and as we shall see, often meaningless questions ‘Where do you live?’ ‘What are you?’ ‘What religion?’ ‘What race?’ ‘What nationality?’ are all thought of today as logical questions. By the twenty-first century it either will have become evident to humanity that these questions are absurd and anti-evolutionary or men will no longer be living on Earth.”

As you can see, by looking beyond a simplistic view of the relationship between technology and humanity, the scare factor for the future dissolves. There remains one piece of the puzzle I have deliberately avoided so far: what if artificial intelligence acquires intentionality, purposefulness and self-awareness? I would argue that our current architectures for artificial intelligence are very far from that of the human brain. We would first need to understand what consciousness is to be able to recreate it by artificial means, if this is even possible. At this time, our computing architectures aren’t suited to the structural complexity apparently required for conscious thought. Cellular automata hold promise, but the levels of intelligence currently achieved with such alternative computing architectures are at best primitive. In the meantime, I would urge our educational and professional establishments to focus on building creative capacity, empathy, and fun.


Nice summary. There’s a simple solution to your last point. Humans will build bio-silicon interfaces so that there’s a fusion of the best of our creative capabilities with silicon’s number-crunching capabilities. There won’t be an “us” vs. “them.” “We” will have the best of both worlds.

From the portfolio analysis work I’ve been involved in, the number one issue I’ve identified for humanity is surviving the transition from human labor to automation. Civilization requires proactive policies that minimize the potential for disruption to our society so that a smooth and efficient transition can be implemented.

Unfortunately, it doesn’t seem to be on anyone’s radar.